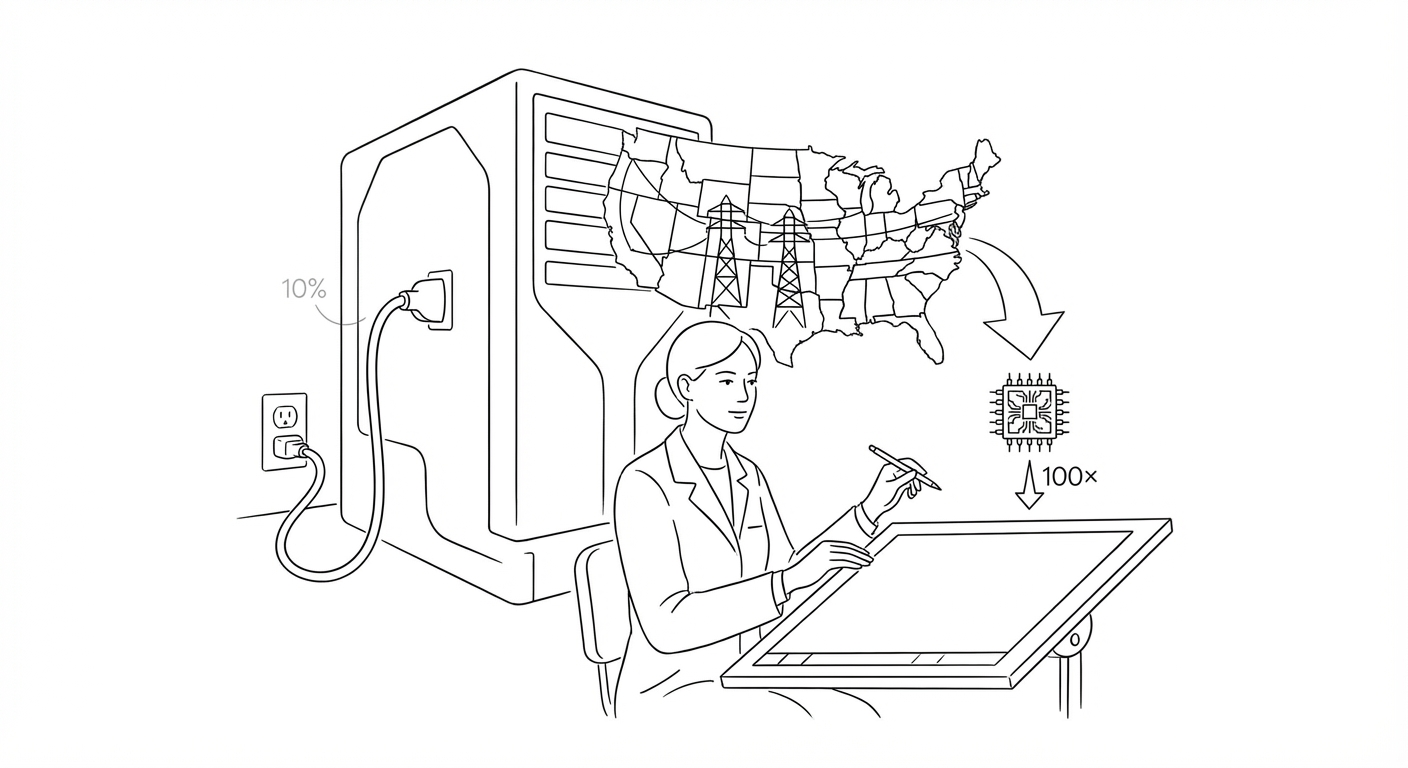

AI Energy Demand Hits 10% of U.S. Grid as Efficiency Research Targets 100x Reduction

Data centers consumed 415 terawatt-hours in 2024, with demand projected to double by 2030. Researchers propose neuro-symbolic systems to slash consumption while improving accuracy.

Artificial intelligence systems and data centers consumed more than 10 percent of total U.S. electricity production in 2024, according to International Energy Agency figures cited by researchers at Tufts University, who warn that demand is on track to double by 2030.
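As a rough illustration of what a doubling implies, the short calculation below works out the constant yearly growth rate needed to get there. It assumes the doubling is measured from the 2024 baseline of 415 terawatt-hours, which the projection does not state explicitly; the function and variable names are illustrative only.

```python
# Illustrative arithmetic only. Assumption: "double by 2030" is measured
# from the 2024 baseline; the cited projection does not spell this out.
BASELINE_TWH = 415.0            # 2024 consumption cited in the article
BASE_YEAR, TARGET_YEAR = 2024, 2030


def implied_annual_growth(multiple: float, years: int) -> float:
    """Constant yearly growth rate that yields `multiple` after `years` years."""
    return multiple ** (1.0 / years) - 1.0


years = TARGET_YEAR - BASE_YEAR
rate = implied_annual_growth(2.0, years)
projected_twh = BASELINE_TWH * (1.0 + rate) ** years

print(f"Implied growth: {rate:.1%} per year")
print(f"Projected {TARGET_YEAR} consumption: {projected_twh:.0f} TWh")
```

Under that assumption, doubling in six years works out to growth of roughly 12 percent a year, compounding to about 830 terawatt-hours in 2030.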

The 415 terawatt-hours consumed in 2024 have prompted a wave of efficiency research. Engineers at Tufts unveiled a proof-of-concept neuro-symbolic AI system in early April 2026 that combines neural networks with symbolic reasoning, claiming the hybrid approach could cut energy consumption by as much as a factor of 100 while improving task performance. The system helps robots "think more logically instead of relying on brute-force trial and error," according to the university's announcement.

Separately, researchers at the Japan Advanced Institute of Science and Technology developed a retrieval-augmented generation system for architectural imaging. It produces detailed building renderings from text prompts in stages: it first generates a sketch, then augments the sketch with components retrieved from a database, and only then produces the final rendering. The staged approach aims to reduce the computational waste inherent in end-to-end generative models.
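The three stages can be pictured with a toy sketch. This is not the JAIST implementation: the component database, the keyword matching, and the text-only "rendering" are all hypothetical stand-ins chosen to show the order of operations, on the assumption that each stage hands a smaller, more constrained problem to the next.

```python
from dataclasses import dataclass


@dataclass
class Sketch:
    prompt: str
    elements: list[str]  # coarse element labels found in the draft


# Hypothetical component database: element label -> detailed component.
COMPONENT_DB = {
    "window": "double-glazed casement window",
    "door": "oak panel door",
    "roof": "gabled slate roof",
}


def generate_sketch(prompt: str) -> Sketch:
    """Stage 1 stand-in: keep the prompt words that name known elements."""
    words = [w.strip(".,").lower() for w in prompt.split()]
    return Sketch(prompt, [w for w in words if w in COMPONENT_DB])


def augment(sketch: Sketch) -> dict[str, str]:
    """Stage 2: retrieve a detailed component for each sketched element."""
    return {label: COMPONENT_DB[label] for label in sketch.elements}


def render(sketch: Sketch, components: dict[str, str]) -> str:
    """Stage 3 stand-in: describe the result in text instead of drawing it."""
    parts = ", ".join(components.values())
    return f"Rendering of '{sketch.prompt}' using: {parts}"


sketch = generate_sketch("A house with a roof, a door and a window")
result = render(sketch, augment(sketch))
print(result)
```

In this toy version the retrieval step is a dictionary lookup; a real system would presumably search a far larger library of components, but the staging is the same.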

The infrastructure buildout is creating acute financing and insurance challenges. Global AI data center spending could reach 7 trillion dollars by 2030, McKinsey estimates, with individual deals routinely exceeding 10 billion dollars. An 8.5 billion dollar GPU-backed loan to CoreWeave introduced novel credit structures, while insurers are adapting bespoke policies to cover risks that traditional underwriting frameworks do not address.

Microsoft introduced multi-agent orchestration features for Copilot in early April, routing queries through OpenAI's GPT for content generation and Anthropic's Claude for fact-checking. The "Council" tool allows users to compare outputs side-by-side, part of the company's effort to reduce hallucinations in enterprise deployments.
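A generate-then-verify loop of this kind can be sketched in a few lines. The code below is not Microsoft's implementation and calls no real model API: `toy_generator`, `toy_checker`, and the `KNOWN_FACTS` table are hypothetical stand-ins for the two models, and the retry limit is an assumption made for illustration.

```python
from typing import Callable

# Hypothetical stand-in for whatever the fact-checking model knows.
KNOWN_FACTS = {"capital of france": "paris"}


def toy_generator(query: str) -> str:
    """Stand-in for the content-generation model."""
    return "Paris is the capital of France."


def toy_checker(query: str, draft: str) -> bool:
    """Stand-in for the fact-checking model: accept a draft only if it
    contains the expected answer for a known topic in the query."""
    q, d = query.lower(), draft.lower()
    return any(topic in q and answer in d
               for topic, answer in KNOWN_FACTS.items())


def orchestrate(query: str,
                generate: Callable[[str], str],
                check: Callable[[str, str], bool],
                max_attempts: int = 2) -> dict:
    """Route a query to a generator, then to a checker, retrying on failure."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    draft = ""
    for attempt in range(1, max_attempts + 1):
        draft = generate(query)
        if check(query, draft):
            return {"answer": draft, "verified": True, "attempts": attempt}
    # Return the last draft, flagged as unverified, rather than hiding it.
    return {"answer": draft, "verified": False, "attempts": max_attempts}


result = orchestrate("What is the capital of France?",
                     toy_generator, toy_checker)
print(result)
```

Returning the unverified draft with a flag, rather than suppressing it, mirrors the side-by-side comparison idea: the user still sees the output but knows it did not pass the check.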

The energy crunch arrives as practical applications proliferate. Pratik Desai, a 34-year-old technologist, built an LLM-assisted workflow in early 2026 to manage his mother's Stage 4 cancer care, ingesting daily Epic system exports into NotebookLM and Claude to spot diagnostic errors and detect emergencies. Meanwhile, Adobe polling found 26 percent of U.S. taxpayers now use AI chatbots for tax preparation, despite research showing elevated error rates in financial contexts.

The sustainability question is colliding with market dynamics. Tech stocks have declined 11 percent on average year-to-date amid a broader pullback driven by geopolitical tensions and investor reassessment of AI economics. Some wealth managers are advising clients to "buy the dip," while fee-based advisors view the correction as a healthy market clearing. The tension reflects uncertainty over whether efficiency gains can keep pace with deployment ambitions.

Sources

http://www.sciencedaily.com/releases/2026/04/260405003952.htm

Tufts neuro-symbolic AI proof-of-concept claims 100x energy reduction through logic-driven reasoning over brute-force computation.

https://www.forbes.com/sites/quickerbettertech/2026/04/04/small-business-technology-news-salesforce-rolls-out-a-major-ai-upgrade-for-slack/

JAIST architectural imaging system and Microsoft Copilot multi-agent orchestration as practical efficiency and accuracy improvements.

https://letsdatascience.com/news/ai-data-centers-strain-insurers-capacity-7e9eb853

Infrastructure financing stress as AI data center deals reach $10B+ scale with novel GPU-backed credit structures.

https://letsdatascience.com/news/pratik-desai-builds-ai-workflow-for-cancer-care-e5743855

Caregiver-driven clinical oversight workflow using Epic exports and LLMs to detect diagnostic errors in Stage 4 cancer treatment.