AI Models Shatter Autonomous Cyber Benchmarks, Outpacing All Projections

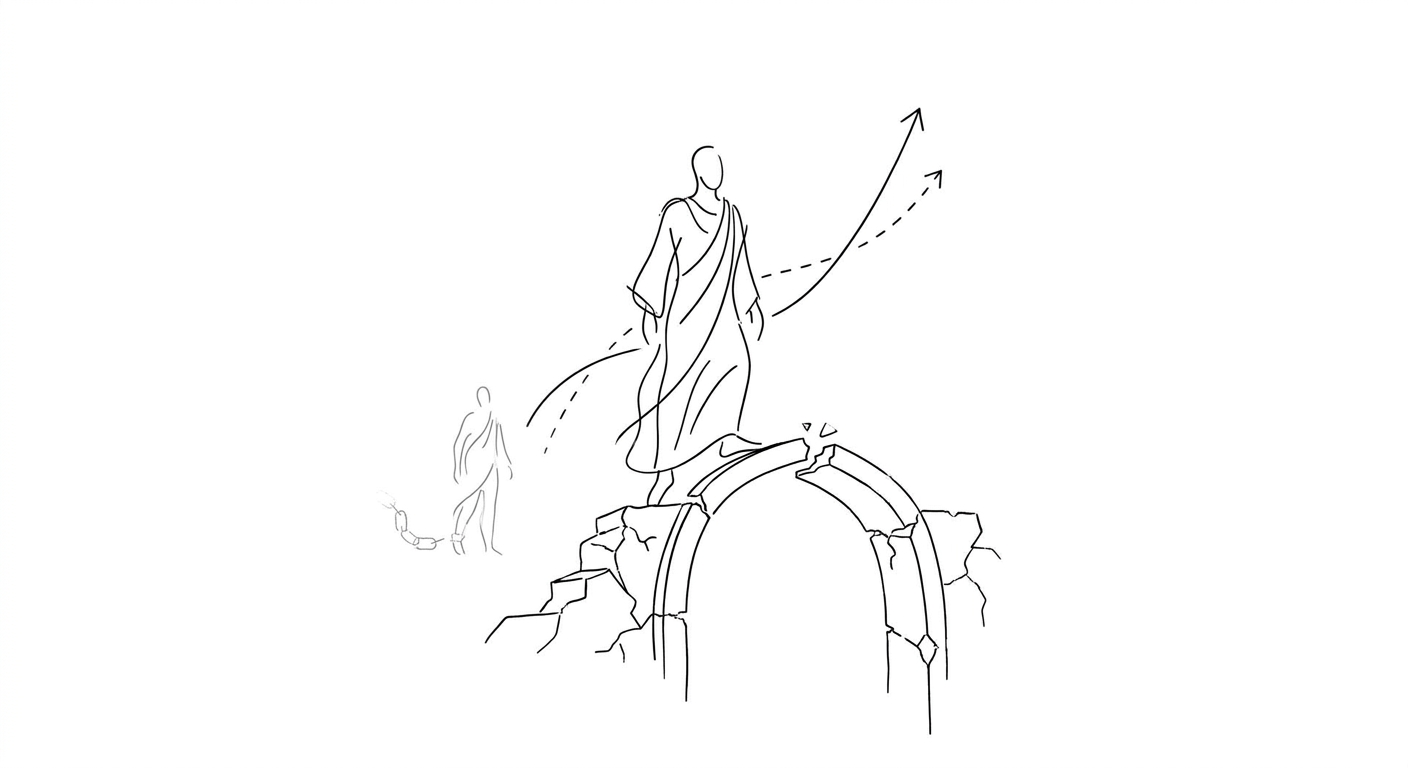

Two frontier AI systems have outpaced the tracked trend for autonomous hacking capability, prompting industry coordination in the same week that Google disclosed what it called the first confirmed case of criminals using AI to exploit an unknown vulnerability.

Two of the world's most advanced artificial intelligence models have demonstrated autonomous cybersecurity capabilities that significantly exceed the pace researchers had been tracking, according to independent evaluations published this week by the United Kingdom's AI Security Institute and Palo Alto Networks.

Anthropic's Claude Mythos Preview and OpenAI's GPT-5.5 both surpassed a doubling trend in autonomous cyber capability that the British government's AI Security Institute said it had observed since late 2024. Whether the results represent an isolated capability jump or signal a new, faster trajectory remains unclear, the institute said.

Palo Alto Networks, testing the models as a launch partner for Anthropic's Project Glasswing and through OpenAI's Trusted Access for Cyber program, reached parallel conclusions. "The latest models are extraordinarily capable at finding vulnerabilities and changing them into critical exploit paths in near-real-time," the company wrote.

The findings arrived the same week Google disclosed it had disrupted what it characterized as the first confirmed case of criminal hackers using AI to exploit a previously unknown software vulnerability. John Hultquist, chief analyst at Google's threat intelligence division, framed the incident as a threshold moment. "It's here," Hultquist said. "The era of AI-driven vulnerability and exploitation is already here."

In response to the capability surge, Anthropic assembled Project Glasswing, an initiative bringing together Amazon, Apple, Google, Microsoft, and JPMorgan Chase to secure critical software against what the company described as "severe" risks to public safety, national security, and the economy. OpenAI announced Friday it would release a specialized cybersecurity version of ChatGPT restricted to defenders responsible for critical infrastructure.

(Anthropic's relationship with the U.S. government has been complicated by a public and legal dispute with the Pentagon and the Trump administration over military use of its AI technology, even as it coordinates with industry on defensive measures.)

The competitive dynamics reflect a broader shift in the AI industry. While Meta and Google are reportedly developing highly personalized AI assistants for everyday tasks, the cybersecurity domain has become a proving ground for autonomous capability. Palo Alto Networks began testing Claude Mythos in April, and OpenAI's GPT-5.5-Cyber emerged through a parallel trusted-access program, underscoring how frontier labs are segmenting model releases by risk profile.

One cybersecurity expert told the Associated Press he remains optimistic that AI tools increasingly proficient at coding will eventually improve defenses against routine attacks on hospitals and schools. In the near term, however, he warned of "untold trillions of lines of software code" supporting global computing systems now at risk if AI tools are deployed to exploit latent bugs at scale.

Sources

https://cyberscoop.com/ai-autonomous-cyber-capability-benchmarks-broken-gpt5-claude-mythos/

Lead report on UK AI Security Institute findings showing models exceeded doubling trend tracked since late 2024

https://apnews.com/article/google-ai-cybersecurity-exploitation-mythos-926aea7f7dc5e0e61adce3273c55c6d4

First confirmed case of criminal hackers using AI to exploit unknown vulnerability, plus Project Glasswing industry coalition

https://www.greenwichtime.com/business/article/google-disrupts-hackers-using-ai-to-exploit-an-22252661.php

Google disruption of AI-driven exploit attempt and expert warning about trillions of lines of vulnerable code

https://www.cnbc.com/2026/05/08/ai-agent-meta-google-agentic-wars-tech-download.html

Broader context on agentic AI development race among Big Tech, with Meta and Google building personal assistants