Amazon's Trainium Chip Wins Major Clients as Liquid Cooling Becomes Industry Standard

AWS opens its chip development lab to showcase Trainium3's liquid-cooled architecture, the hardware behind a $50 billion investment deal with OpenAI and partnerships with Anthropic and Apple.

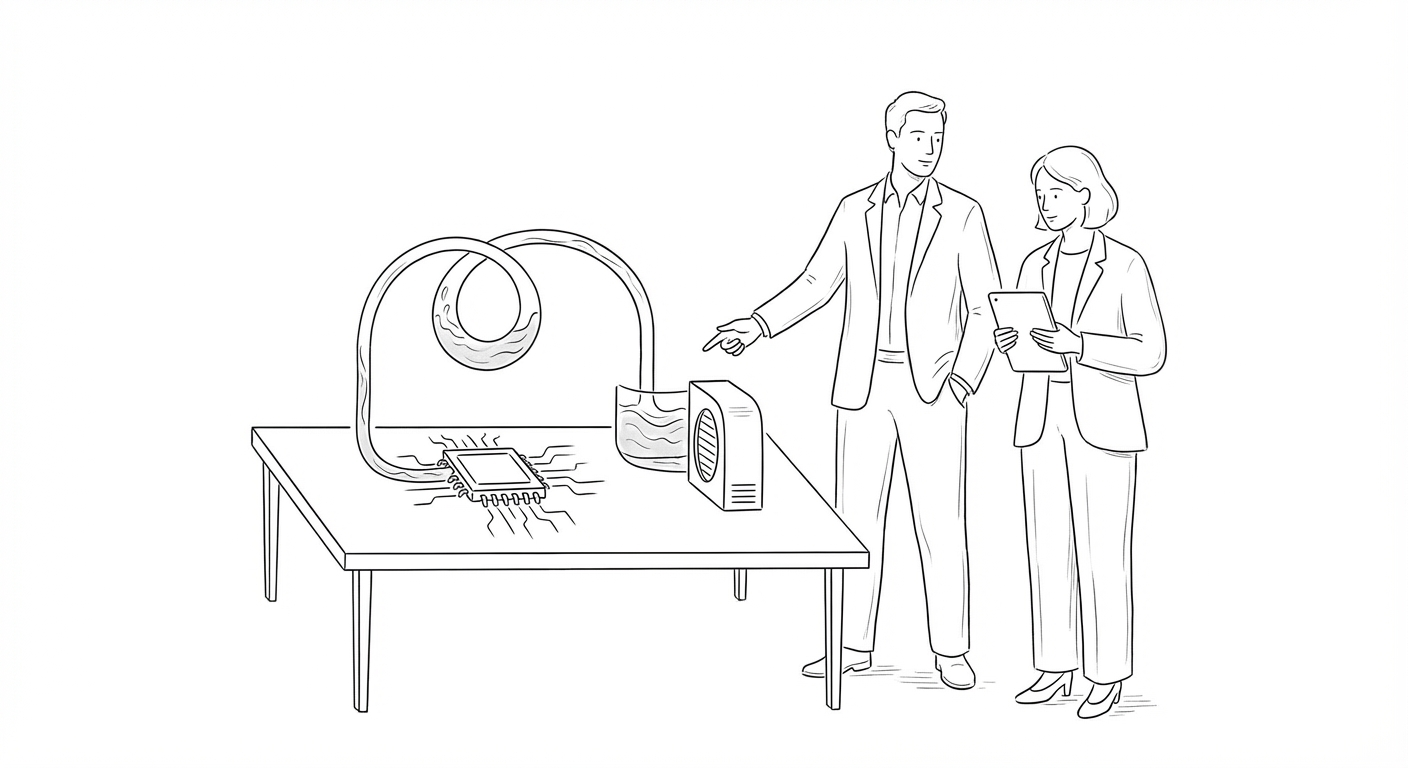

Amazon Web Services has opened the doors to its chip development lab, revealing the infrastructure behind a $50 billion investment deal with OpenAI and partnerships with Anthropic and Apple. The facility houses rows of servers equipped with liquid-cooled Trainium3 processors, Graviton CPUs, and Amazon Nitro chips, all running on a closed-loop cooling system designed to reduce environmental impact.

The tour, conducted shortly after Amazon CEO Andy Jassy announced the OpenAI agreement, represents Amazon's most aggressive push yet to challenge Nvidia's dominance in AI infrastructure. AWS engineer Carroll told TechCrunch that migrating existing AI workloads to Trainium requires "basically a one-line change, and then recompile, and then run on Trainium," positioning the chip as a drop-in replacement for developers already locked into Nvidia's ecosystem.

AWS has also integrated Cerebras Systems' inference chip into servers running Trainium, promising what Amazon describes as "superpowered, low-latency AI performance." The move signals a broader industry shift toward specialized inference hardware as AI deployment expands beyond model training.

(Amazon funded the majority of TechCrunch's travel expenses for the lab tour, which took place at an undisclosed data center location.)

The Trainium3 architecture marks a departure from traditional air-cooled server designs that have dominated data centers for decades. Hardware development engineer David Martinez-Darrow demonstrated maintenance procedures on the multi-sled UltraServer configuration, which sandwiches Neuron network switches between compute sleds. The liquid cooling system recirculates coolant in a closed loop, addressing both thermal efficiency and water consumption concerns that have plagued hyperscale AI deployments.

Amazon's chip ambitions arrive as the AI hardware landscape fragments along geopolitical and architectural lines. The company's ability to secure major customers like OpenAI, Anthropic, and Apple suggests that software compatibility, rather than raw performance, may determine the next generation of AI infrastructure winners.

Sources

https://techcrunch.com/2026/03/22/an-exclusive-tour-of-amazons-trainium-lab-the-chip-thats-won-over-anthropic-openai-even-apple/

Exclusive lab tour reveals liquid-cooled Trainium3 architecture and one-line code migration strategy to challenge Nvidia

https://www.reuters.com/world/china/huaweis-new-ai-chip-find-favour-with-bytedance-alibaba-which-plan-place-orders-2026-03-27/

Huawei's 950PR chip targets inference workloads with easier software migration, mirroring Amazon's compatibility strategy

https://www.reuters.com/business/arm-jumps-new-ai-chip-drive-billions-annual-revenue-2026-03-25/

Arm's AGI CPU launch for agentic AI reflects industry-wide pivot to inference and autonomous agent workloads

https://www.ft.com/content/23031c83-7a4a-4a59-a30f-a98900064504

Server demand for AI inference strains component supply chains as Intel and AMD prioritize data center capacity