Dell Pivots to 'AI Factories' as Hyperscalers Pour $710 Billion Into Infrastructure

Once a commodity server vendor, Dell now positions itself as a trusted advisor for integrated AI systems, deploying to more than 4,000 customers as cloud giants race to build agentic computing capacity.

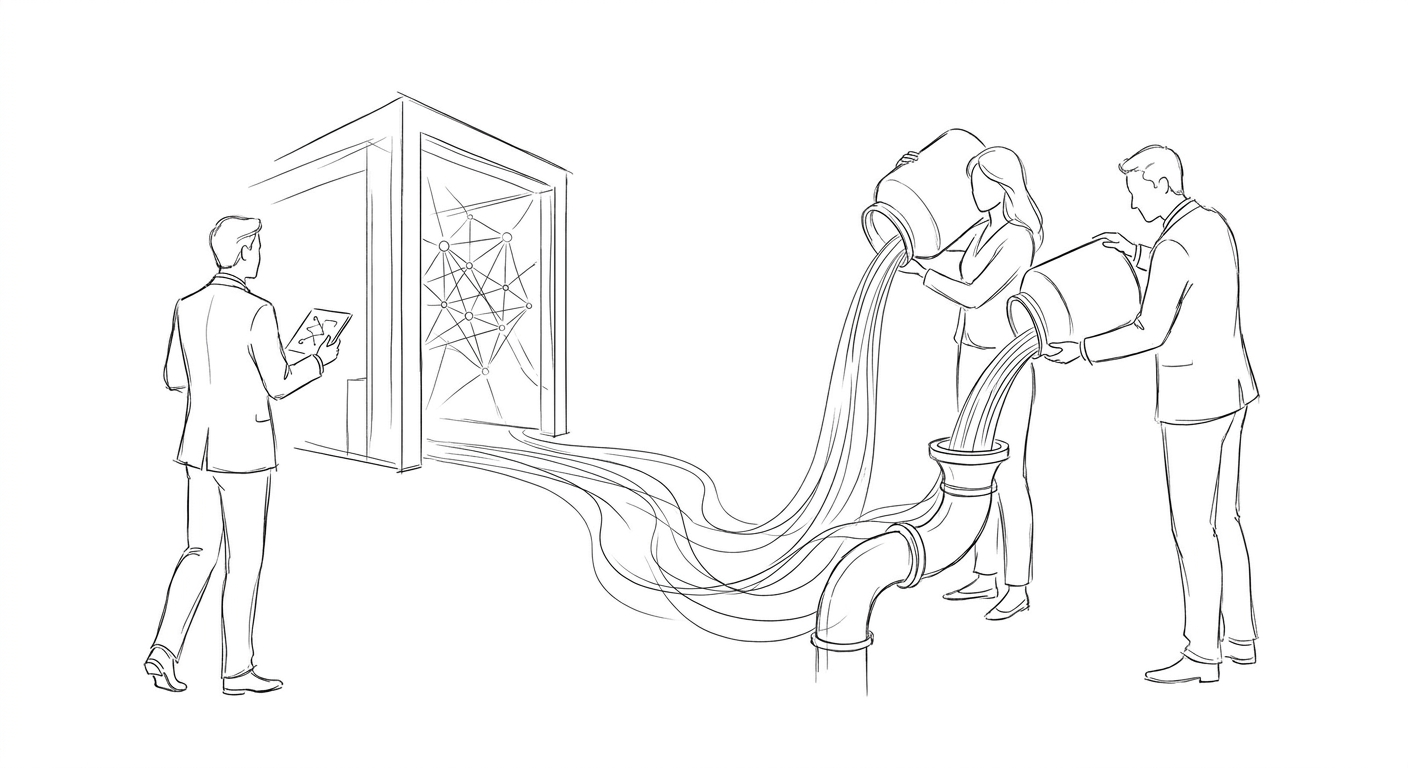

Dell Technologies has transformed from a commodity hardware supplier into what it calls an "AI factory" integrator, deploying complete AI infrastructure systems to more than 4,000 customers in partnership with Nvidia. The company reported AI server sales more than doubled from approximately $10 billion in early 2025 to $25 billion in February 2026, with $50 billion projected for the current year.

"We've gone from an infrastructure provider to a trusted advisor for the most important workload we've seen in recent history," says Arthur Lewis, president of Dell's Infrastructure Solutions Group. The shift reflects a broader industry pivot toward integrated systems combining accelerated computing, networking, storage, and software rather than standalone components. Customers now include Lowe's, McLaren, and cloud infrastructure provider CoreWeave.

The transformation comes as Amazon, Microsoft, Google, and Meta collectively plan to spend roughly $710 billion this year on AI infrastructure capital expenditures, according to financial disclosures. Amazon leads at approximately $200 billion, followed by Microsoft at $190 billion, Google parent Alphabet at $185 billion, and Meta at $135 billion. These hyperscalers are no longer building data centers simply to support chatbots; they are preparing for autonomous AI agents, enterprise copilots, robotics systems, and AI-powered search products that require far more computing infrastructure than earlier cloud workloads.

Nvidia reported data-center revenue surging 75% year over year to $193.7 billion in its February earnings, driven by demand from hyperscalers deploying Hopper and Blackwell AI systems. The company posted quarterly net income of $43 billion, up 94% from a year earlier, cementing its position as the dominant supplier of essential hardware throughout the AI buildout. Its CUDA platform remains deeply embedded across enterprise AI workloads, making ecosystem switching costly for customers.

Qualcomm is threading AI capabilities across devices from smartphones to data centers. In March it unveiled its Snapdragon Wear Elite chip for smartwatches, which includes a dedicated neural processor. Its AI200 accelerator, shipping later this year, takes the same inference architecture into the server rack. The ambition is to move more AI processing onto the device itself rather than sending every task back to the cloud. That poses a distinct hardware challenge, because phones and laptops must combine multiple functions on a single chip.

The U.S. Department of Defense has signed contracts with Nvidia, Microsoft, Amazon Web Services, and Reflection AI to deploy artificial intelligence technologies on classified networks, following a disagreement with Anthropic over unrestricted access. The Pentagon wanted tools free of the conditions against mass surveillance and autonomous weapons that Anthropic had imposed. More than 1.3 million defense personnel have used the GenAI.mil platform, primarily for unclassified tasks such as research, document preparation, and data analysis.

Meta has signed an agreement to use millions of Amazon's proprietary Graviton processors for AI agent development, directing complex tasks toward central processing units rather than graphics processing units. The partnership channels Meta's spending toward the AWS ecosystem rather than competitors like Google Cloud, with which Meta previously signed a $10 billion agreement. In his letter to shareholders, Amazon CEO Andy Jassy emphasized that enterprises are seeking optimal price-performance ratios in artificial intelligence, positioning the company to compete with Nvidia and Intel through both its Graviton processors and its Trainium chips, which are designed for AI training.

Dell reorganized itself around AI over the past three years, anticipating a fundamental shift in how businesses operate. "Eighteen months ago we said, 'Holy sh-t, we need to move even faster,'" Lewis says. "The advancements were undeniable." The company's trajectory mirrors broader industry recognition that AI infrastructure represents the largest private technology buildout since the internet boom, with hyperscalers racing to deploy services now rather than years from now to reduce deployment risk and monetize AI products quickly.

Sources

https://time.com/collection/time100-most-influential-companies/2026/dell-technologies/

Dell's transformation from commodity vendor to AI factory integrator with $50B projected server sales and 4,000 customer deployments

https://247wallst.com/investing/2026/05/01/the-big-4-hyperscalers-are-spending-710-billion-on-ai-heres-the-stock-that-profits-most/

Hyperscalers' collective $710B AI capex plans position Nvidia as direct beneficiary of largest private tech infrastructure buildout since internet boom

https://time.com/article/2026/04/27/time100-companies-hardware/

Qualcomm's strategy to move AI processing onto devices across smartwatches, PCs, cars, and data centers rather than cloud-dependent workloads

https://zamin.uz/en/technology/197625-meta-and-amazon-deal-expected-to-strike-at-nvidia-dominance.html

Meta's shift toward Amazon Graviton CPUs for AI agents signals growing demand for central processors over graphics units in specific workloads