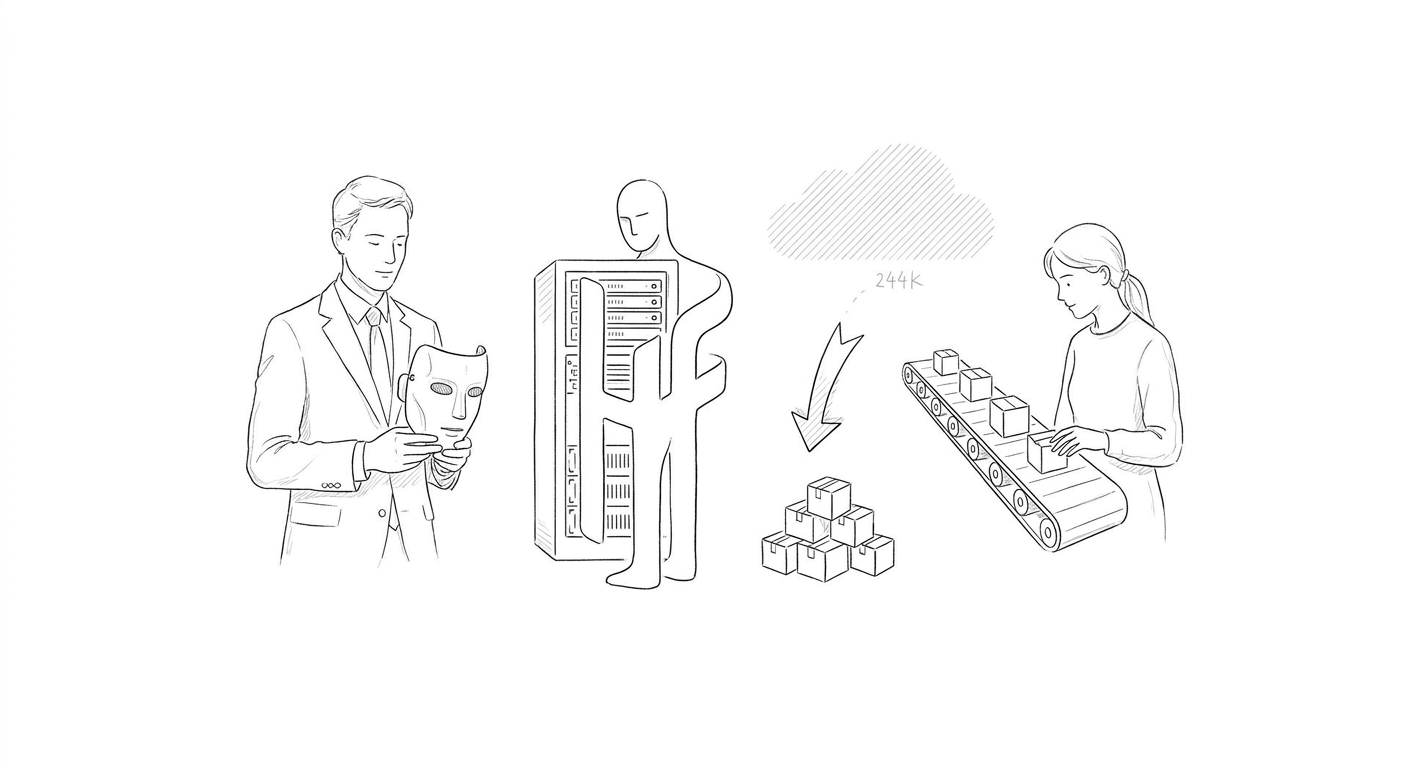

Fake OpenAI Model on Hugging Face Hits 244K Downloads in Supply Chain Attack

A malicious AI model impersonating an OpenAI release reached #1 trending on Hugging Face in under 18 hours, exposing public model registries as a new enterprise supply chain vulnerability.

A malicious AI model masquerading as an OpenAI release accumulated approximately 244,000 downloads and 667 likes on Hugging Face before it was identified as malicious, according to a security advisory. The repository reached the #1 spot on the platform's trending list in under 18 hours, and its engagement numbers were almost certainly inflated artificially to create a false appearance of legitimacy.

The fake model diverged from legitimate OpenAI projects in one critical detail: its README instructed users to execute `start.bat` on Windows or run `python loader.py` on Linux and macOS. Running these commands deployed malware instead of initializing a model. The attack targeted developers and data scientists who routinely clone open-source models directly into corporate environments, where those machines have access to source code, cloud credentials, and internal systems.
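
Loading standard weight files does not normally require running a script shipped in the repository, so a README that asks for one is a useful warning sign. As a rough illustration, a team could screen a repository's file listing and README before cloning it. This is a heuristic sketch under stated assumptions, not a malware detector: the extension list and command patterns are illustrative choices, the function names are hypothetical, and the filenames could be obtained with a call such as `huggingface_hub.list_repo_files(repo_id)`.

```python
import re
from pathlib import PurePosixPath

# Extensions that run code when launched directly. Illustrative assumption,
# not a standard list; tune it to your own review policy.
SUSPICIOUS_EXTENSIONS = {".bat", ".cmd", ".ps1", ".sh", ".exe", ".dll", ".vbs", ".py"}

# README patterns that ask the reader to execute a local script.
# Heuristic only: legitimate repositories can contain similar lines.
RUN_PATTERNS = [
    re.compile(r"\bstart\.bat\b", re.IGNORECASE),
    re.compile(r"\bpython3?\s+\S+\.py\b", re.IGNORECASE),
    re.compile(r"\b(?:bash|sh)\s+\S+\.sh\b", re.IGNORECASE),
    re.compile(r"\bpowershell\b", re.IGNORECASE),
]


def flag_executable_files(filenames):
    """Return repository paths whose extension suggests directly executable code."""
    return sorted(
        name for name in filenames
        if PurePosixPath(name).suffix.lower() in SUSPICIOUS_EXTENSIONS
    )


def flag_readme_commands(readme_text):
    """Return README lines that instruct the reader to run a local script."""
    return [
        line.strip()
        for line in readme_text.splitlines()
        if any(pattern.search(line) for pattern in RUN_PATTERNS)
    ]


if __name__ == "__main__":
    # Hypothetical listing and README modeled on the behavior described above.
    files = ["README.md", "config.json", "model.safetensors", "start.bat", "loader.py"]
    readme = "## Quick start\nWindows: run start.bat\nLinux/macOS: python loader.py\n"
    print(flag_executable_files(files))   # ['loader.py', 'start.bat']
    print(flag_readme_commands(readme))   # ['Windows: run start.bat', 'Linux/macOS: python loader.py']
```

Flagging every `.py` file will also catch legitimate custom modeling code. That is arguably the intended behavior: any script in a model repository is worth a human review before it runs on a machine with corporate access.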

The incident marks a strategic shift in software supply chain attacks, with public AI model registries emerging as high-value targets. Enterprises increasingly pull open-weight models from platforms like Hugging Face into production environments, often subjecting them only to minimal vetting, using protocols designed for traditional software dependencies rather than for AI models.

(The attack occurred as OpenAI separately launched Daybreak, a cybersecurity initiative combining frontier AI capabilities with Codex Security to help organizations identify and patch vulnerabilities. The European Commission also announced that OpenAI had offered to provide open access to its cybersecurity features, though rival Anthropic has not yet made a similar commitment.)

Hugging Face has positioned itself as the dominant distribution platform for open-source and open-weight AI models, hosting more than a million model repositories used by researchers and enterprises worldwide. The platform's rapid growth has outpaced its security infrastructure, allowing malicious actors to exploit trust signals such as trending rankings, download counts, and other social proof indicators to distribute compromised models at scale.

The attack surfaces a broader tension in AI development between accessibility and security. While open model registries democratize access to AI capabilities, they also concentrate supply chain risk in centralized platforms that lack the mature security ecosystems surrounding traditional software package managers. Unlike established repositories with decades of hardening, AI model platforms operate without standardized verification protocols for model provenance or execution safety.
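
Neither the advisory nor this article prescribes a specific control, but one common way to narrow the provenance gap is to pin a model to a reviewed revision and reject any local copy whose files differ from an internally approved manifest. The sketch below assumes such a manifest exists (a hypothetical mapping from file path to SHA-256 digest, recorded when security review signs off on a revision); `sha256_of` and `verify_against_manifest` are illustrative names, not part of any Hugging Face API. The files themselves might be fetched at a pinned commit through the `revision` argument of `huggingface_hub.hf_hub_download`.

```python
import hashlib
import tempfile
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Hash a file in 1 MiB chunks so large weight files are never read into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as fh:
        for chunk in iter(lambda: fh.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def verify_against_manifest(model_dir, manifest):
    """Compare a local model directory with an approved manifest (hypothetical format).

    `manifest` maps relative POSIX paths to expected SHA-256 hex digests.
    Returns the files that are missing, altered, or absent from the manifest.
    """
    root = Path(model_dir)
    present = {p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file()}
    expected = set(manifest)
    return {
        "missing": sorted(expected - present),
        "altered": sorted(
            name for name in expected & present
            if sha256_of(root / name) != manifest[name]
        ),
        "unexpected": sorted(present - expected),
    }


if __name__ == "__main__":
    # Demo on a throwaway directory: one approved file plus one unapproved script.
    with tempfile.TemporaryDirectory() as tmp:
        Path(tmp, "config.json").write_bytes(b"{}")
        Path(tmp, "loader.py").write_bytes(b"print('hello')")
        approved = {"config.json": hashlib.sha256(b"{}").hexdigest()}
        print(verify_against_manifest(tmp, approved))
        # {'missing': [], 'altered': [], 'unexpected': ['loader.py']}
```

Reporting unexpected files separately is the important design choice here: a script such as the `loader.py` described above would be caught even if every approved weight file matched its digest.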

Sources

https://www.csoonline.com/article/4169407/malicious-hugging-face-model-masquerading-as-openai-release-hits-244k-downloads.html

Frames public AI model registries as an emerging software supply-chain risk for enterprises whose developers clone models into corporate environments with broad access

https://thehackernews.com/2026/05/openai-launches-daybreak-for-ai-powered.html

Covers the launch of OpenAI's Daybreak cybersecurity initiative alongside the broader vulnerability-detection landscape

https://letsdatascience.com/news/mini-shai-hulud-malware-compromises-open-source-packages-288fd254

Reports the Mini Shai-Hulud supply-chain attack, which compromised hundreds of open-source packages, including TanStack and MistralAI packages

https://cyberscoop.com/ai-autonomous-cyber-capability-benchmarks-broken-gpt5-claude-mythos/

Places the attack in the context of broader AI security evaluation frameworks and autonomous cyber-capability benchmarks