Health-LLM Framework Bridges Wearable Data and Clinical Prediction as FDA Tightens Oversight

Researchers position context engineering over model scale as medical AI shifts from general assistants to specialized agents interpreting sensor streams, while regulatory pressure mounts.

A new framework for deploying large language models in clinical settings is gaining traction among medical device developers, emphasizing context engineering and retrieval-augmented architectures over raw computational power as the pathway to regulatory approval and real-world efficacy.

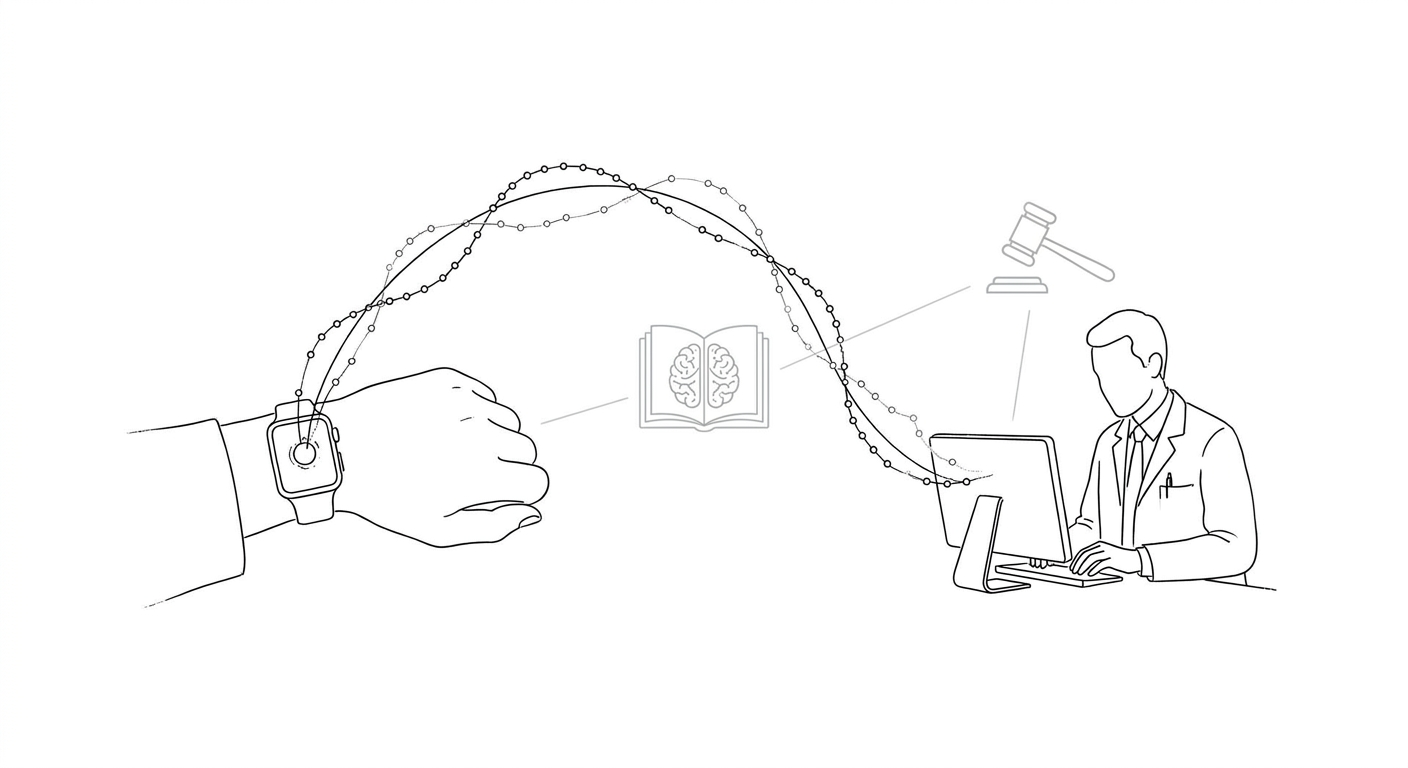

The Health-LLM approach, detailed in research from MIT and collaborators, focuses on interpreting continuous wearable sensor data to generate health predictions, a departure from the text-based diagnostic tasks that have dominated medical AI benchmarks. A related project, MDAgents, pairs LLMs with adaptive collaboration protocols, enabling multiple models to negotiate clinical decisions rather than relying on a single monolithic system. It demonstrated how ensembles of specialized models can outperform individual general-purpose systems on medical challenge problems.

Partha Anbil, senior vice president of life sciences at Coforge Limited, argued that "AI capability isn't competence" and that the clinical deployment gap remains wide despite benchmark performance gains. His analysis highlighted context engineering—the deliberate structuring of prompts, retrieval databases, and domain-specific fine-tuning—as the most actionable lever for digital health product teams navigating FDA scrutiny.

The U.S. Food and Drug Administration's AI/ML Action Plan and its Software as a Medical Device (SaMD) guidance now emphasize transparency and continuous monitoring, requiring manufacturers to document how models handle edge cases and drift over time.

The regulatory environment is tightening in parallel with technical advances. The FDA's 2021 AI/ML-Based Software as a Medical Device (SaMD) Action Plan laid out an approach for adaptive algorithms that learn post-deployment, but implementation has lagged as manufacturers struggle to balance innovation velocity with safety documentation. Because the Health-LLM architecture is modular, separating retrieval, reasoning, and decision layers, it may offer a compliance advantage by isolating the components subject to regulatory review.
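To make the layered idea concrete, here is a minimal Python sketch of a pipeline that keeps retrieval, reasoning, and decision logic in separate functions. It is an illustration under stated assumptions, not the Health-LLM implementation: every name (`Evidence`, `retrieve`, `reason`, `decide`, `run_pipeline`) is hypothetical, the keyword match stands in for a real retriever, and the evidence-count score stands in for a model call.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Evidence:
    source: str
    text: str


@dataclass
class Assessment:
    risk_score: float          # illustrative score in [0.0, 1.0]
    evidence: List[Evidence]   # kept so a reviewer can trace the inputs


@dataclass
class Decision:
    action: str
    assessment: Assessment


def retrieve(query: str, corpus: List[Evidence]) -> List[Evidence]:
    """Retrieval layer: naive word overlap, a stand-in for a real retriever."""
    terms = set(query.lower().split())
    return [e for e in corpus if terms & set(e.text.lower().split())]


def reason(evidence: List[Evidence]) -> Assessment:
    """Reasoning layer: placeholder for an LLM call (assumption).

    The score simply grows with the number of matching passages.
    """
    return Assessment(risk_score=min(1.0, 0.25 * len(evidence)), evidence=evidence)


def decide(assessment: Assessment, threshold: float = 0.5) -> Decision:
    """Decision layer: a fixed, auditable rule applied to the assessment."""
    action = "flag_for_clinician" if assessment.risk_score >= threshold else "no_action"
    return Decision(action=action, assessment=assessment)


def run_pipeline(query: str, corpus: List[Evidence]) -> Decision:
    """Chain the three layers; each can be tested or swapped on its own."""
    return decide(reason(retrieve(query, corpus)))
```

Because each layer has its own input and output type, a team could, in principle, document and re-validate one layer (for example, the decision threshold) without touching the others.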

Meanwhile, the broader LLM ecosystem is fragmenting along use-case lines. Generic models like ChatGPT and Claude continue to serve millions seeking ad hoc mental health guidance, but specialized medical LLMs remain largely in development and testing stages. The gap between consumer-facing chatbots and clinically validated tools has widened as healthcare institutions demand evidence of performance in high-stakes scenarios, not just conversational fluency.

The shift toward wearable-data interpretation also reflects a strategic pivot in digital health. Traditional diagnostic AI focused on static inputs—radiology images, lab results, genomic sequences. Wearables generate continuous, noisy streams that require temporal reasoning and personalized baselines, tasks where LLMs' pattern-matching strengths may prove advantageous. Early results suggest that retrieval-augmented generation, which grounds model outputs in curated medical literature, reduces hallucination rates compared to end-to-end generative approaches.
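As a rough illustration of what "personalized baselines" and retrieval grounding might look like in code, the Python sketch below scores new wearable readings against the wearer's own earlier readings and then assembles a text context that combines the flagged readings with reference snippets. The function names, the z-score approach, and the threshold of 2.0 are assumptions chosen for illustration; the source does not describe how Health-LLM computes baselines or builds prompts.

```python
from statistics import mean, stdev
from typing import List, Sequence


def personalized_zscores(readings: Sequence[float], baseline_window: int) -> List[float]:
    """Score readings after the baseline window against the wearer's own baseline.

    The first `baseline_window` readings define the personal mean and
    sample standard deviation; later readings are expressed as z-scores.
    """
    if baseline_window < 2 or len(readings) <= baseline_window:
        raise ValueError("need at least 2 baseline readings and 1 new reading")
    baseline = readings[:baseline_window]
    mu, sigma = mean(baseline), stdev(baseline)
    if sigma == 0:
        raise ValueError("baseline has no variation; z-scores are undefined")
    return [(x - mu) / sigma for x in readings[baseline_window:]]


def build_context(zscores: Sequence[float], snippets: Sequence[str],
                  threshold: float = 2.0) -> str:
    """Assemble prompt context: flagged readings plus retrieved reference text."""
    flagged = [(i, z) for i, z in enumerate(zscores) if abs(z) >= threshold]
    lines = [f"Readings outside personal baseline: {len(flagged)} of {len(zscores)}"]
    for i, z in flagged:
        lines.append(f"- reading {i}: z={z:+.1f}")
    lines.append("Reference material:")
    lines.extend(f"- {s}" for s in snippets)
    return "\n".join(lines)
```

For example, with resting heart-rate readings of 60, 62, 58, 60 as the baseline and a new reading of 80, the new reading lands far above the wearer's own range and would be flagged, even though 80 bpm is unremarkable as a population-level value.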

Competing frameworks are emerging from academic and industry labs. AutoGen, a multi-agent conversation system developed by Microsoft Research and collaborators, offers an alternative architecture for orchestrating LLM ensembles. MedRAG, a benchmarking suite for retrieval-augmented medical models, has become a de facto standard for evaluating context-engineering techniques. These tools reflect a consensus that medical AI's next phase will hinge on hybrid architectures blending neural networks, symbolic reasoning, and curated knowledge bases.

The clinical AI landscape now features a tension between speed and rigor. Startups and device manufacturers face pressure to ship products quickly as competitors race to capture market share, yet regulatory agencies are slowing approvals to scrutinize black-box decision-making. The Health-LLM framework's emphasis on interpretability and modularity may represent a compromise, offering a path to deployment that satisfies both commercial timelines and safety mandates.

Sources

https://www.mddionline.com/artificial-intelligence/how-large-language-models-are-reshaping-health-prediction-clinical-decision-making

Frames context engineering as the key deployment lever and highlights the FDA's evolving SaMD guidance for adaptive algorithms.

https://www.nature.com/articles/s41598-026-52801-3

Demonstrates LLM-assisted hyper-heuristic optimization for time-series prediction, validating generalizability across variable types.

https://www.forbes.com/sites/lanceeliot/2026/05/09/generative-ai-such-as-chatgpt-can-help-cope-with-impulse-control-issues/

Notes that generic LLMs serve millions for mental health guidance but specialized medical models remain in testing stages.

https://www.nature.com/articles/s41598-026-48924-2

Provides technical grounding on precision, recall, and F1-score metrics critical for evaluating medical AI performance.