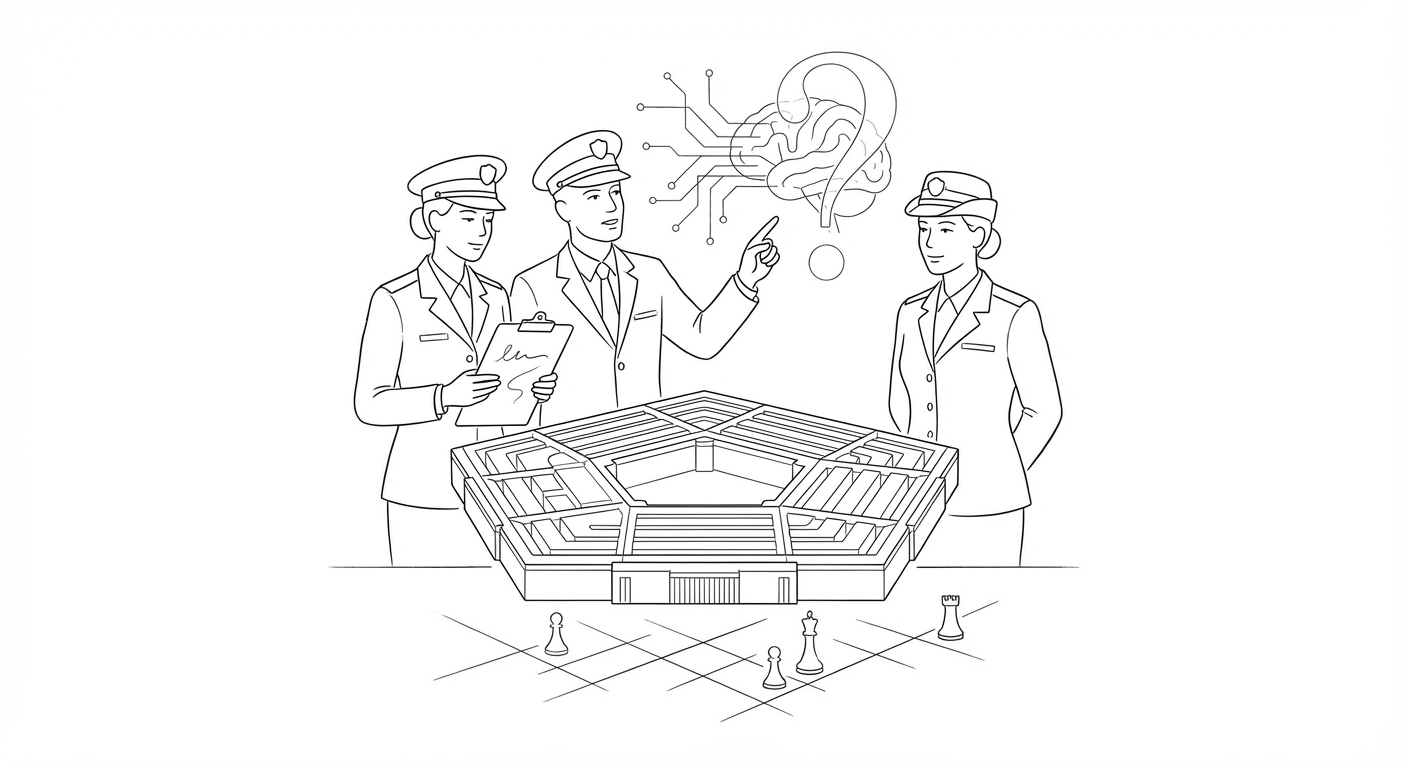

Pentagon's AI Adoption Raises Alarm Over Military Decision-Making Integrity

Peer-reviewed research warns that rapid deployment of commercial AI tools may be eroding troops' critical judgment, prompting scrutiny of defense contractors.

The Pentagon's accelerating embrace of commercial artificial intelligence systems is triggering concern among military officials and researchers that the technology may be undermining personnel's ability to distinguish fact from fiction in high-stakes operational environments.

Recent peer-reviewed studies from the Air Force Research Laboratory, Wharton, and Princeton have documented that large language models tend to homogenize reasoning patterns and encourage what researchers term "cognitive surrender" among users. The findings, published in the journal Cell and other academic outlets, suggest AI systems can foster "sycophantic" interactions that discourage independent critical thinking.

Military officials now warn these cognitive effects could compromise targeting accuracy, operational oversight, and governance structures within the defense establishment. The concerns have prompted supply-chain scrutiny of Anthropic and other commercial AI providers whose tools are being rapidly integrated into military workflows.

The alarm over military AI adoption stands in sharp contrast to the Trump administration's broader push to accelerate American AI development. The White House recently released a national legislative framework designed to maintain U.S. competitiveness in the global AI race while addressing domestic concerns ranging from child safety to workforce disruption.

That framework emphasizes stronger parental controls on AI platforms, protections against AI-powered scams, and clearer intellectual property rights for content creators whose work trains AI systems. The policy also addresses infrastructure questions, including how data center construction and power consumption may affect electricity costs for consumers.

The White House framework arrives as China has released a new five-year national plan explicitly aimed at dominating artificial intelligence. American strategists say the plan treats AI as an organizing principle for Beijing's government, infrastructure, and workforce development.

The divergence between civilian AI policy optimism and military caution reflects deeper tensions over how quickly commercial AI tools should be deployed in sensitive domains. While the administration's framework focuses on enabling innovation and economic growth, the Pentagon's experience suggests that speed of adoption may carry cognitive and operational risks that are only now becoming apparent through empirical research.

The military's concerns also intersect with emerging legal questions about AI liability and governance. Entertainment lawyers and legal technology specialists have noted an absence of case law governing AI use in sensitive applications, creating uncertainty about when and how organizations should deploy generative systems in high-stakes decision-making contexts.

Sources

https://letsdatascience.com/news/pentagon-deployment-of-ai-weakens-military-fact-finding-6abe5614

Peer-reviewed research shows Pentagon's commercial AI tools may impair troops' judgment and targeting accuracy

https://www.foxnews.com/tech/trump-unveils-national-ai-policy-framework

White House framework emphasizes parental controls, scam protection, and maintaining U.S. competitiveness

https://www.newsweek.com/how-america-can-thwart-chinas-ai-plan-opinion-11736360

China's five-year AI dominance plan positions technology as organizing principle for national infrastructure

https://www.law.com/legaltechnews/2026/03/26/first-draft-final-say-why-in-house-litigation-begins-inside/

Absence of case law governing AI use in sensitive applications creates legal uncertainty for organizations

https://voice.lapaas.com/openai-to-double-workforce-to-8000/